An AI Case Study: Gemini vs Copilot ⨯ sabe vs sepa

About a year ago, I experimented with some of the first public incarnations of customer-facing, search-oriented AI. At the time, I felt like it had a ways to go.

Today I tried out Google’s Gemini and Microsoft’s Copilot, and I was impressed with the results. I also felt that Gemini outperformed Copilot when I gave them both the language-translation prompt I was interested in:

When the English phrase “I think you know it is” is translated into Spanish as “Creo que sabe que si”, why is the subjective conjugation for saber, sepa, not used?

When pasting the prompt into this blog post, I realized that I had mistyped ‘subjunctive’ as ‘subjective’. Given the context, an AI could reasonably have gotten confused, but neither product did. Both seemed to realize I meant ‘subjunctive’.

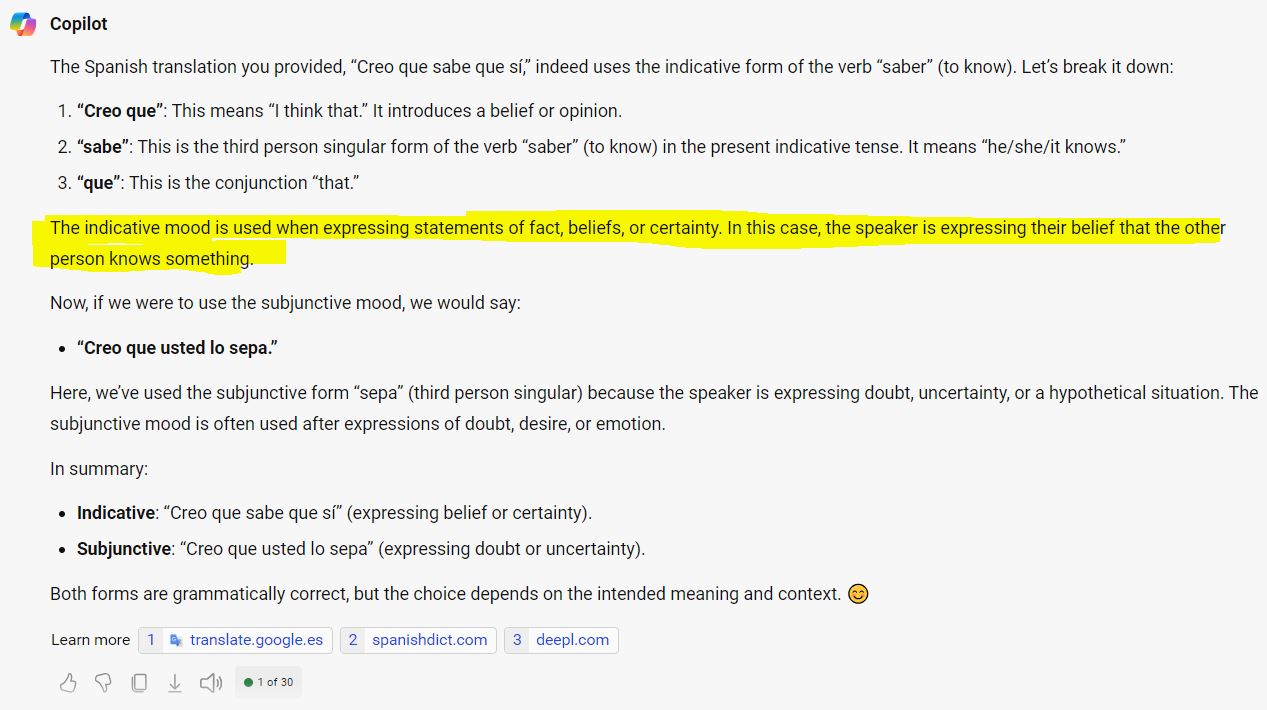

I think Microsoft’s Copilot provided a correct answer, but it wasn’t especially clear:

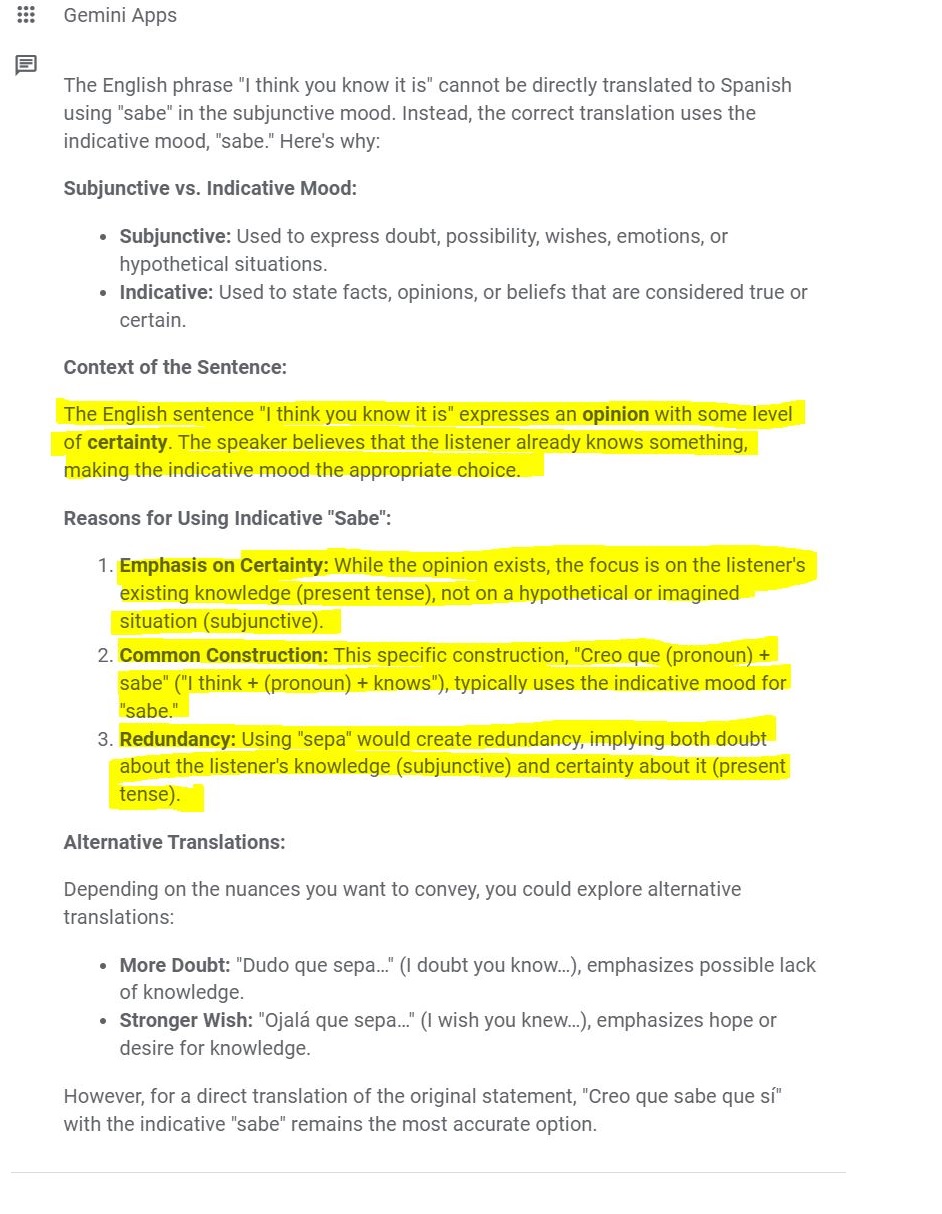

Google’s Gemini gave a thorough response that definitively answered the question for me. In terms of clearing up my confusion, I feel like it knocked it out of the park:

You’ll notice that Gemini used the word ‘sabe’ in a few places where it should have used ‘saber’. However, that error was essentially a typo, and since I knew enough to spot it, it didn’t detract from the answer.

(Upon closer examination, there is another typo where ‘present tense’ should be ‘indicative’, but that didn’t affect my comprehension either. Additionally, reason #3 appears to be an AI “hallucination”. I wasn’t reading very closely once I had found my answer, and in hindsight Gemini’s response may deserve additional demerits. However, even if reason #3 is tossed out, which my level of knowledge allows me to do, my overall assessment remains the same. I suppose it’s clear that this information medium is imperfect, and we are all going to have to come to terms with how we think about these AI errors.)

After doing this comparison, I realized I had overlooked a use case for search-oriented AI. Beyond sheer convenience, and sometimes necessity, on technology platforms that emphasize voice input and voice output, these AI products act as a sort of second-order search: they search myriad data sources and synthesize the results.

A year ago, I guess I understood that this was exactly what these products did, but I didn’t realize there might be cases where I would rather have a machine do those searches.

In this case, I am not an expert in Spanish, and a good answer to my question might need to draw on many disparate data sources, so it made sense to have a machine do the searching for me.

So perhaps that is one use case I was overlooking: questions about subjects in which my knowledge is limited and that may require searching through many disparate data sources.

Day in and day out, I still use regular Google Search.